Automatically Identifying and Fixing Single Channel Audio Defects in Stereo Audio

Author: Koen Putman

Downloads

Source

Paper

Requirements: libsndfile.

It also uses Kiss FFT (included) for the FFT.

The code is fairly simple and should build by simply running make, as long as you have GCC.

The interface is entirely command-line based, and running the program without any arguments prints some of the available options. The code has some comments, but it should also be fairly self-explanatory when read alongside the paper.

Introduction

This is the project page for my audio processing project. It contains some of the same information as the paper, as well as some audio samples. The main goal of this project was to solve several issues that occur in only one channel of stereo audio. Examples of these issues can be found in the sections below. All of the samples in the dataset come from internet streams from a club, so their quality varies and they are all subject to copyright. As such, the dataset will not be shared, and this project is mostly me solving my own audio problems. There might be something useful in here for others, though.

Audio problems

Noise

While the recordings have some overall noise, several of them had very apparent white noise in a single channel while the other channel was clean. This was probably introduced by the mixer or by something in the connection between the audio source and the streaming PC. The noise is not very audible when the music is loud, but it is definitely annoying at lower amplitudes. Even when it is virtually inaudible, it becomes noticeable when the music suddenly stops and you take off your headset: your brain has been compensating for the one-sided noise without you noticing, so you will probably hear phantom noise in the other ear.
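One way to confirm which channel carries the extra noise is to compare the noise floor of each channel, that is, the lowest power it reaches in any window. During quiet passages the clean channel drops close to silence, while a channel with constant white noise cannot fall below the noise level. The sketch below is our own illustration, not the method from the paper. It assumes an interleaved stereo float buffer (the layout libsndfile's `sf_readf_float` returns), and the helper names and window size are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Mean-square power of channel ch (0 = left, 1 = right) of an interleaved
 * stereo buffer over frames [start, start + len). len must be > 0. */
static double channel_power(const float *buf, size_t start, size_t len, int ch)
{
    double sum = 0.0;
    for (size_t i = start; i < start + len; i++) {
        double s = buf[2 * i + ch];
        sum += s * s;
    }
    return sum / (double)len;
}

/* Hypothetical noise-floor estimate: the lowest windowed power of one channel.
 * A channel with one-sided white noise keeps a clearly higher floor than the
 * clean channel. Returns -1.0 if the buffer is shorter than one window. */
double noise_floor_power(const float *buf, size_t frames, int ch, size_t window)
{
    assert(window > 0 && (ch == 0 || ch == 1));
    double lowest = -1.0;
    for (size_t start = 0; start + window <= frames; start += window) {
        double p = channel_power(buf, start, window, ch);
        if (lowest < 0.0 || p < lowest)
            lowest = p;
    }
    return lowest;
}
```

Comparing the two floors (for example, flagging a channel whose floor is many times higher than the other's) gives a rough indicator of one-sided noise. The threshold for "many times" is an assumption that would need tuning on real recordings.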

Broken channel

Broken audio in this case refers to one channel suddenly cutting out or becoming heavily distorted. This happens occasionally due to glitches in the streaming setup or the turntables. The usual fix is to copy the intact channel over the broken one, but because the problem only occurs occasionally, it is very tedious to locate and deal with.
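Locating a channel that cuts out can be automated by comparing the power of the two channels window by window. The sketch below is an assumption-laden illustration rather than the paper's detector: `find_dropouts`, the power ratio and the silence threshold are all hypothetical, and it only catches dropouts, not heavy distortion. It assumes an interleaved stereo float buffer.

```c
#include <assert.h>
#include <stddef.h>

/* Mean-square power of channel ch of an interleaved stereo buffer. */
static double channel_power(const float *buf, size_t start, size_t len, int ch)
{
    double sum = 0.0;
    for (size_t i = start; i < start + len; i++) {
        double s = buf[2 * i + ch];
        sum += s * s;
    }
    return sum / (double)len;
}

/* For each full window w, result[w] is 0 if the left channel looks broken,
 * 1 if the right one does, and -1 otherwise. A channel counts as broken when
 * its power is below ratio * (other channel's power) while the other channel
 * is above `silence` (e.g. ratio = 1e-4 is roughly 40 dB quieter).
 * result must hold frames / window entries. Returns the number written. */
size_t find_dropouts(const float *buf, size_t frames, size_t window,
                     double ratio, double silence, int *result)
{
    assert(window > 0);
    size_t w = 0;
    for (size_t start = 0; start + window <= frames; start += window, w++) {
        double l = channel_power(buf, start, window, 0);
        double r = channel_power(buf, start, window, 1);
        if (r > silence && l < ratio * r)
            result[w] = 0;
        else if (l > silence && r < ratio * l)
            result[w] = 1;
        else
            result[w] = -1;
    }
    return w;
}
```

The silence check keeps passages where both channels are quiet from being flagged, since a dropout only matters while the other channel still carries music.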

Unbalanced channels

This problem is more common and occurs in virtually every recording, though usually not to a very noticeable degree. When the difference between the channels gets bigger, however, it becomes very annoying to listen to.

Clipping

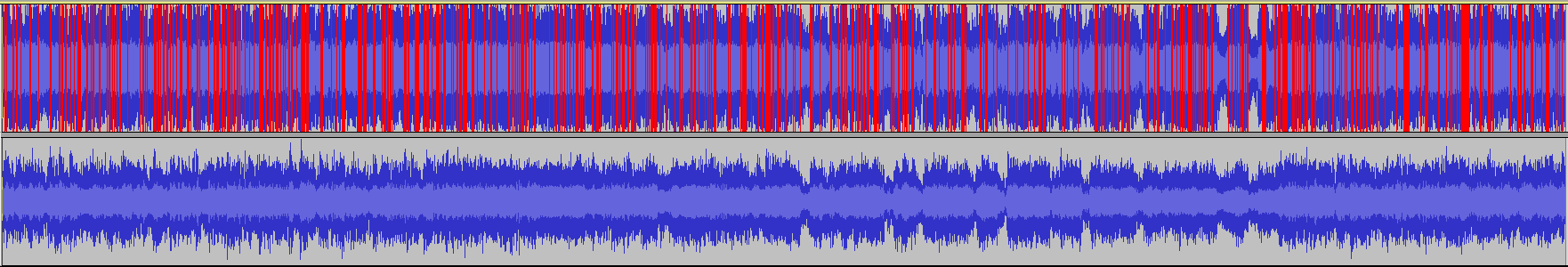

The fragment used for the unbalanced channels also contains clipping, as is visible in the screenshot of the waveform above. This is a problem when trying to normalise, because information is lost wherever the signal clips, so the balanced audio will sound somewhat off. Dealing with this would mean recreating information that is no longer there, so it is a difficult process.
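While repairing clipping is hard, detecting it is comparatively simple: clipped sections show up as runs of consecutive samples stuck at the peak level, like the flat tops in the waveform above. The sketch below is a hypothetical detector, not code from the project. It assumes an interleaved stereo float buffer, and the threshold and minimum run length are illustrative parameters.

```c
#include <assert.h>
#include <stddef.h>

/* Count the samples of channel ch that belong to a run of at least min_run
 * consecutive samples with magnitude >= threshold. Isolated peaks are not
 * counted; flat runs at the peak level are the usual signature of clipping. */
size_t count_clipped(const float *buf, size_t frames, int ch,
                     float threshold, size_t min_run)
{
    assert(ch == 0 || ch == 1);
    assert(min_run > 0);
    size_t clipped = 0, run = 0;
    /* Iterate one step past the end so a run that reaches the end is closed. */
    for (size_t i = 0; i <= frames; i++) {
        int at_peak = 0;
        if (i < frames) {
            float s = buf[2 * i + ch];
            float mag = s < 0.0f ? -s : s;
            at_peak = mag >= threshold;
        }
        if (at_peak) {
            run++;
        } else {
            if (run >= min_run)
                clipped += run;
            run = 0;
        }
    }
    return clipped;
}
```

A count like this could, for example, warn that a channel is clipped before it is boosted during balancing.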

Results

This project is very much still a work in progress, so the results are not amazing, but there are some cases in which it performs decently. The paper goes into more detail about how these results are obtained, as well as describing what goes wrong and what could be improved. These are just a few samples, and of course they were picked to show off cases where the approach is fairly effective.

Noise

The second fragment has been filtered in an attempt to remove the noise. This filter is the hardest to control and does not work well on many samples, but there is a definite improvement here. This is achieved without any prior knowledge of what the noise would be: the processing uses no noise profile.

Broken audio

The second fragment has been fixed automatically by replacing the left channel with the right one. This sample was extracted from a larger section, so the transition is instant here, but this kind of problem is solved nicely by this approach.
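The repair itself amounts to copying one channel over the other for the affected frames. The sketch below is a hypothetical version for an interleaved stereo float buffer. The optional linear crossfade at both ends of the range is our own assumption, not something the project describes; it avoids an abrupt jump where the replacement starts and stops. With `fade` set to 0 it performs a plain, instant replacement.

```c
#include <assert.h>
#include <stddef.h>

/* Replace channel dst with channel src over frames [start, end) of an
 * interleaved stereo buffer. Over the first and last `fade` frames of the
 * range the two are blended linearly; fade = 0 gives an instant switch. */
void replace_channel(float *buf, size_t start, size_t end,
                     int dst, int src, size_t fade)
{
    assert(start <= end);
    assert(dst != src && (dst == 0 || dst == 1) && (src == 0 || src == 1));
    size_t len = end - start;
    if (2 * fade > len)
        fade = len / 2;   /* the two fades must not overlap */
    for (size_t i = start; i < end; i++) {
        /* distance (in frames) to the nearest edge of the range */
        size_t from_edge = (i - start < end - 1 - i) ? (i - start) : (end - 1 - i);
        /* weight of the replacement: ramps up near the edges, 1 in the middle */
        float w = (fade > 0 && from_edge < fade)
                      ? (float)(from_edge + 1) / (float)(fade + 1)
                      : 1.0f;
        float *d = &buf[2 * i + dst];
        *d = (1.0f - w) * *d + w * buf[2 * i + src];
    }
}
```

In practice the frame range could come from a per-window dropout detector, with a fade of a few milliseconds' worth of frames.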

Unbalanced audio/clipping

The audio in the second fragment is definitely more balanced, but now the right channel is slightly dominant. Issues like this require further balancing.